Working at Dreem: Stanislas, Researcher

Welcome to the world of Stanislas Chambon, a Ph.D. student in Dreem’s scientific research team. Stanislas is currently in the final year of his thesis on machine learning applied to the EEG (electroencephalogram, the measurement of the brain’s electrical activity).

His research at Dreem, essential to the development and improvement of the headband, was recently published: Chambon, S., Galtier, M., Arnal, P., Wainrib, G., & Gramfort, A. (2017). A deep learning architecture for temporal sleep stage classification using multivariate and multimodal time series.

To better understand what is at stake in this publication, we asked Stanislas a few questions:

Hello Stanislas, can you tell us a little about your background?

I discovered machine learning in 2015, while I was pursuing a double degree in mathematics at Technische Universität München (TUM) and École Polytechnique. Attracted by this field, I did an internship at Dreem, where I had the opportunity to apply my knowledge of machine learning. Once I finished my master’s degree, I returned to Paris to start a thesis under the supervision of Alexandre Gramfort and to take a job at Dreem. My research focuses on deep learning and aims to address the lack of annotated data through transfer learning, in order to improve the algorithms.

Could you explain the objectives of this study? Its hypotheses?

To understand the context of this research, you first need to know that a traditional sleep study is typically done in a hospital. During the night, the patient wears a device that records the electrical activity of the brain (electroencephalogram, EEG), of the eyes (electrooculogram, EOG) and of the chin muscles (electromyogram, EMG).

The recorded signals are then analyzed by a medical expert. Following the criteria of a reference nomenclature (the AASM rules), the expert assigns a sleep stage to each 30-second period:

● wakefulness (W)

● light sleep (N1 and N2)

● REM sleep (R)

● deep sleep (N3)

This is a repetitive and time-consuming task: every 30 seconds of signal must be analyzed, and the result depends on the expert’s subjectivity.
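To give an order of magnitude, the minimal sketch below cuts a night-long recording into 30-second periods (epochs). The 100 Hz sampling rate, the 8-hour duration, the two channels and the random signal are assumptions chosen for illustration, not figures from the study.

```python
import numpy as np

EPOCH_SECONDS = 30   # scoring window used by the expert
SFREQ = 100          # hypothetical sampling rate in Hz (assumption)


def split_into_epochs(signal, sfreq=SFREQ, epoch_seconds=EPOCH_SECONDS):
    """Cut a (n_channels, n_samples) recording into non-overlapping epochs.

    Returns an array of shape (n_epochs, n_channels, samples_per_epoch);
    trailing samples that do not fill a whole epoch are dropped.
    """
    samples_per_epoch = int(sfreq * epoch_seconds)
    n_channels, n_samples = signal.shape
    n_epochs = n_samples // samples_per_epoch
    trimmed = signal[:, : n_epochs * samples_per_epoch]
    # (n_channels, n_epochs, spe) -> (n_epochs, n_channels, spe)
    return trimmed.reshape(n_channels, n_epochs, samples_per_epoch).transpose(1, 0, 2)


# An 8-hour night on 2 channels, with random values standing in for real signals
rng = np.random.default_rng(0)
night = rng.standard_normal((2, 8 * 3600 * SFREQ), dtype=np.float32)
epochs = split_into_epochs(night)
print(epochs.shape)
```

An 8-hour night yields 960 epochs, each of which the expert has to score by hand.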

Stanislas, how do you manage to make this task less repetitive and time-consuming?

An increasingly popular alternative is to have a computer perform this task: this is automatic classification. A machine learning algorithm can either assist the expert with the diagnosis or replace them. The goal of the game is then to produce the best possible algorithm, i.e. the one that best reproduces the decision of a doctor or the consensus of several doctors.

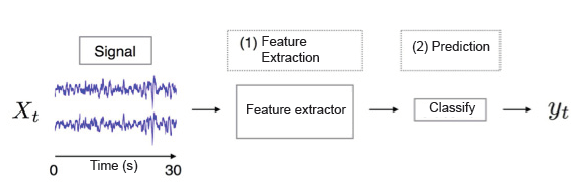

This relies on machine learning, which is conducted in two stages:

(1) features are first extracted from the raw signal to create a new representation of it;

(2) the extracted features are then given to an algorithm trained to classify sleep stages (cf. Figure 1).
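As an illustration of these two stages, here is a minimal NumPy sketch. The features (per-channel mean and standard deviation) echo the kind of expert features used by traditional approaches; the nearest-centroid classifier and the synthetic two-class data are our own illustrative choices, not the methods used in the study.

```python
import numpy as np


def extract_features(epochs):
    """Stage (1): hand-crafted features for each epoch.

    epochs: array of shape (n_epochs, n_channels, n_times).
    Returns (n_epochs, 2 * n_channels): per-channel mean and standard deviation.
    """
    return np.concatenate([epochs.mean(axis=-1), epochs.std(axis=-1)], axis=1)


class NearestCentroid:
    """Stage (2): a deliberately simple classifier (illustrative choice)."""

    def fit(self, X, y):
        self.classes_ = np.unique(y)
        # One centroid (mean feature vector) per class
        self.centroids_ = np.stack([X[y == c].mean(axis=0) for c in self.classes_])
        return self

    def predict(self, X):
        # Euclidean distance from every epoch to every centroid; keep the closest
        dists = np.linalg.norm(X[:, None, :] - self.centroids_[None, :, :], axis=-1)
        return self.classes_[dists.argmin(axis=1)]


# Synthetic data: epochs of class 1 have a larger amplitude than those of class 0
rng = np.random.default_rng(0)
low = rng.normal(scale=1.0, size=(50, 2, 300))
high = rng.normal(scale=3.0, size=(50, 2, 300))
X = extract_features(np.concatenate([low, high]))
y = np.array([0] * 50 + [1] * 50)

clf = NearestCentroid().fit(X, y)
accuracy = (clf.predict(X) == y).mean()
print(f"training accuracy: {accuracy:.2f}")
```

The two stages are independent here: the features are fixed in advance, and only the classifier learns from the data.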

How can machine learning approaches be categorized?

Machine learning approaches can be divided into two categories:

(a) traditional approaches, which rely on extracting expert features (mean, standard deviation…) based on prior knowledge of the signal.

(b) deep learning approaches, which rely on a particular family of algorithms: neural networks. These perform (1) feature extraction and (2) classification in one and the same procedure. They are particularly interesting because they learn a representation of the signal that is much richer than the one used in traditional machine learning and better suited to the task at hand, which makes them extremely effective on many tasks (image recognition, speech recognition…).

Figure 1: the principle of machine learning.


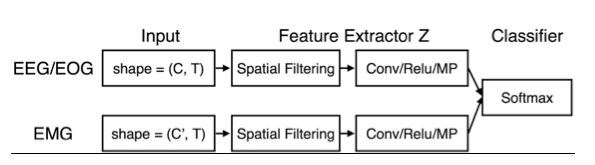

This study focuses on the automatic classification of sleep stages with a deep learning method. It proposes a simple architecture that integrates information from the different EEG, EOG and EMG sensors (cf. Figure 2).
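To make the idea concrete, here is a rough NumPy sketch of the forward pass of a multimodal network of this kind. The layout is a simplifying assumption on our part: each modality goes through its own branch (a learned linear mix of its channels, then a temporal convolution with ReLU and max pooling), and the features of all branches are concatenated before a softmax over the five sleep stages. Channel counts, layer sizes, the 100 Hz sampling rate and the random, untrained weights are all hypothetical; this is not the published architecture.

```python
import numpy as np

rng = np.random.default_rng(0)
N_STAGES = 5                        # W, N1, N2, N3, REM
N_TIMES = 30 * 100                  # one 30 s epoch at a hypothetical 100 Hz
N_VIRTUAL = 4                       # hypothetical number of spatially filtered channels
N_FILTERS, WIDTH, POOL = 8, 50, 10  # hypothetical conv / pooling sizes


def conv1d_valid(x, kernels):
    """Temporal convolution (cross-correlation, 'valid' mode).

    x: (n_in, n_times); kernels: (n_out, n_in, width).
    Returns (n_out, n_times - width + 1).
    """
    windows = np.lib.stride_tricks.sliding_window_view(x, kernels.shape[-1], axis=1)
    return np.einsum("itw,oiw->ot", windows, kernels)


def max_pool(x, size):
    """Non-overlapping max pooling along time; drops an incomplete last window."""
    n_steps = x.shape[1] // size
    return x[:, : n_steps * size].reshape(x.shape[0], n_steps, size).max(axis=2)


def modality_branch(x, spatial, kernels):
    """Feature extractor for one modality."""
    z = spatial @ x                                # spatial filtering: mix channels
    z = np.maximum(conv1d_valid(z, kernels), 0.0)  # temporal conv + ReLU
    return max_pool(z, POOL).ravel()               # pooled, flattened features


def softmax(v):
    e = np.exp(v - v.max())  # subtract the max for numerical stability
    return e / e.sum()


# One epoch per modality (random stand-in signals; channel counts are hypothetical)
inputs = {"EEG": rng.standard_normal((6, N_TIMES)),
          "EOG": rng.standard_normal((2, N_TIMES)),
          "EMG": rng.standard_normal((1, N_TIMES))}

# Random, untrained weights for each branch: (spatial filter, temporal kernels)
params = {m: (rng.standard_normal((N_VIRTUAL, x.shape[0])),
              0.1 * rng.standard_normal((N_FILTERS, N_VIRTUAL, WIDTH)))
          for m, x in inputs.items()}

features = np.concatenate([modality_branch(inputs[m], *params[m]) for m in inputs])
W_out = 0.01 * rng.standard_normal((N_STAGES, features.size))
probs = softmax(W_out @ features)  # one probability per sleep stage
print(features.shape, probs.round(3))
```

In practice all these weights would be learned end to end by gradient descent on labelled epochs, which is what lets a neural network perform feature extraction and classification in a single procedure.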

Figure 2: Architecture of the algorithm integrating the signals of the EEG, EOG and EMG sensors


What is this study aiming at?

This study aims to analyze the influence of different factors on the performance of the method:

a. the number of EEG electrodes

b. the inclusion of EOG and EMG data

c. the temporal context* of the 30-second sample to classify

d. the amount of data needed to train a classification algorithm

*Temporal context: a sleep expert assigns a sleep stage to a 30-second sample based not only on those 30 seconds, but also on the decisions he/she made about the previous samples of the night. What he/she saw previously influences his/her decision, and the algorithm has to take this into account in some way.
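One simple way to give an algorithm some temporal context is to feed it, alongside the features of the sample to classify, the features of its neighbouring samples. The minimal sketch below does this with zero padding at the edges of the night. Note that it uses the neighbours' signal features rather than past decisions, and that the option to also include following samples (`n_after`) is our assumption, not a detail from the interview.

```python
import numpy as np


def add_temporal_context(features, n_before=2, n_after=0):
    """Concatenate each epoch's features with those of its neighbours.

    features: (n_epochs, n_features), in chronological order.
    n_before / n_after: how many previous / following epochs to include.
    Epochs at the edges of the night are padded with zeros.
    Returns (n_epochs, (n_before + 1 + n_after) * n_features).
    """
    n_epochs, n_features = features.shape
    padded = np.concatenate([np.zeros((n_before, n_features)),
                             features,
                             np.zeros((n_after, n_features))])
    # Slice k gives, for every epoch i, the features of epoch i - n_before + k
    windows = [padded[k : k + n_epochs] for k in range(n_before + 1 + n_after)]
    return np.concatenate(windows, axis=1)


feats = np.arange(8, dtype=float).reshape(4, 2)  # 4 epochs, 2 features each
with_context = add_temporal_context(feats, n_before=1)
print(with_context)
```

With `n_before=1`, each row holds the features of the previous epoch followed by those of the current one; the first epoch of the night gets zeros in place of a predecessor.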

Stanislas, what previous research did you rely on?

We mainly relied on the many studies tackling automatic sleep stage classification with deep learning methods, but also on work dealing with EEG signal processing with deep learning algorithms in general.

What procedures have been put in place?

For this work, we had to:

a. collect and extract EEG data and annotations

b. choose metrics to quantify the performance of the studied algorithms

c. re-implement state-of-the-art methods to establish a benchmark.

These methods were re-implemented from their descriptions in various scientific articles.
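The interview does not say which metrics were chosen for step b. As an illustration only, here are two metrics commonly used in sleep staging, where some stages are much rarer than others (N2 usually makes up the largest share of a night): balanced accuracy, the mean of per-stage recalls, and Cohen's kappa, which corrects the agreement between two scorings for chance. The toy scorings below are made up.

```python
from collections import Counter


def balanced_accuracy(y_true, y_pred):
    """Mean of per-class recalls, so rare stages weigh as much as frequent ones."""
    recalls = []
    for c in sorted(set(y_true)):
        idx = [i for i, t in enumerate(y_true) if t == c]
        recalls.append(sum(y_pred[i] == c for i in idx) / len(idx))
    return sum(recalls) / len(recalls)


def cohen_kappa(y_true, y_pred):
    """Agreement between two scorings, corrected for chance agreement."""
    n = len(y_true)
    p_observed = sum(t == p for t, p in zip(y_true, y_pred)) / n
    true_freq, pred_freq = Counter(y_true), Counter(y_pred)
    # Probability that both scorings pick the same stage by chance
    p_chance = sum(true_freq[c] * pred_freq[c] for c in true_freq) / n**2
    return (p_observed - p_chance) / (1 - p_chance)


expert = ["W", "N2", "N2", "N2", "N2", "N3", "REM", "N2"]
model = ["W", "N2", "N2", "N2", "N1", "N3", "N2", "N2"]
print(round(balanced_accuracy(expert, model), 3), round(cohen_kappa(expert, model), 3))
```

Here the model gets 6 of 8 epochs right (75% accuracy), but missing the only REM epoch pulls balanced accuracy down to 0.7 and kappa to about 0.57.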

What results did you expect? Do the results obtained validate your initial hypotheses?

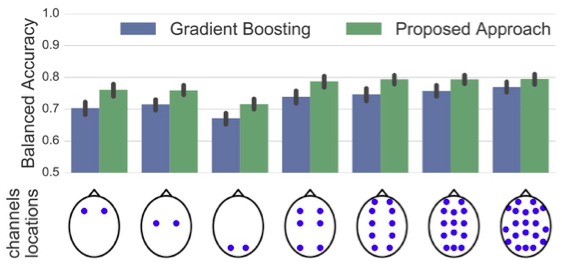

We were able to show that using many electrodes does not significantly improve the performance of the algorithm: a small number of well-placed electrodes is enough for the algorithm to perform well (cf. Figure 3).

Figure 3: Influence of the number of EEG electrodes taken into account by the proposed algorithm and a traditional machine learning algorithm.


Similarly, the influence of the temporal context on the performance of the algorithm seems to decrease when more signals (EEG, EOG, EMG) are taken into account. The information “lost” with a small number of electrodes can be compensated by the temporal context, and vice versa. This highlights several levers the experimenter can act on, such as:

● the lack of spatial information (few EEG channels) can be compensated by taking into account the temporal context of the sample to be classified

● and conversely: more spatial information compensates for the lack of information on the temporal context.

Stanislas, can this study help develop the Dreem headband?

Yes. It makes it possible to set up procedures to quantify the impact of the number of electrodes, their position, and the addition of other modalities (EOG, EMG…). It also shows the various levers we can act on when designing the headband or some of the algorithms embedded in it.

What are the next milestones? What research is currently under way?

The next step focuses on generalizing this type of algorithm to data from other cohorts or recorded with other devices. We are developing methods that allow sleep stage classification algorithms to work better on highly variable data.

In addition, we are interested in detecting patterns of interest in the EEG with deep learning methods. This problem is more complex: the patterns sought are of several kinds, vary in duration and can occur several times during the night. But it is also more interesting, because it gives access to more precise information about a sleeper’s night and about the sleeper him/herself.
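Part of what makes detection harder is that the output is no longer one label per 30-second sample but a variable number of events, each with its own onset and duration. One generic way to turn a detector's output into such events, sketched below under our own assumptions (a detector producing one score per time point, a fixed threshold and a minimum duration), is to group consecutive above-threshold time points. This is not the method under development at Dreem.

```python
import numpy as np


def scores_to_events(scores, sfreq, threshold=0.5, min_duration=0.5):
    """Group consecutive above-threshold time points into (onset, offset) events in seconds.

    scores: 1-D array of per-time-point detection scores (hypothetical detector output).
    sfreq: sampling rate of the scores in Hz.
    Events shorter than `min_duration` seconds are discarded.
    """
    above = np.concatenate([[False], scores > threshold, [False]])
    edges = np.diff(above.astype(int))     # +1 at an onset, -1 just after an offset
    onsets = np.flatnonzero(edges == 1)
    offsets = np.flatnonzero(edges == -1)  # exclusive end index
    return [(on / sfreq, off / sfreq) for on, off in zip(onsets, offsets)
            if (off - on) / sfreq >= min_duration]


sfreq = 10.0          # hypothetical score rate
scores = np.zeros(50)
scores[5:15] = 0.9    # 1.0 s event
scores[20:22] = 0.9   # 0.2 s blip, too short to keep
scores[30:45] = 0.8   # 1.5 s event
events = scores_to_events(scores, sfreq)
print(events)
```

The number and length of the returned events depend on the night, which is exactly what a fixed one-label-per-epoch classifier cannot express.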

What do you plan to do after your thesis?

I care a lot about the issues surrounding sleep, and I intend to continue in this field. How? By focusing more on the industrial side of machine learning to address medical issues, for example the detection of certain pathologies.

Working at Dreem, research… what about you?

Now that you’ve had a look behind the scenes, if you want to find out more about working at Dreem, take a look at our Careers page and get in touch!
